A matrix transformation is a transformation whose rule is based on multiplication of a vector by a matrix. This type of transformation is of particular interest to us in studying linear algebra as matrix transformations are always linear transformations. Further, we can use the matrix that defines the transformation to better understand other properties of the transformation itself.

Mathematically, a transformation T is a matrix transformation if we can write $T(\vec{x}) = A\vec{x}$ for some matrix A.
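To make the definition concrete, here is a minimal sketch in plain Python. The helper name `matrix_transform` and the 2 x 3 matrix `A` are assumptions chosen only for illustration; they are not the matrix used in the example that follows.

```python
def matrix_transform(A, x):
    """Return the product A times x, with A a list of rows and x a list of entries."""
    if any(len(row) != len(x) for row in A):
        raise ValueError("each row of A needs as many entries as x")
    # Each output entry is the dot product of one row of A with x.
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

# Hypothetical 2 x 3 matrix, used only for illustration.
A = [[1, 0, 2],
     [0, 3, 1]]

def T(x):
    """A matrix transformation: its whole rule is 'multiply by A'."""
    return matrix_transform(A, x)

print(T([1, 1, 1]))  # [3, 4]
```

The point of the sketch is that T has no rule of its own beyond the matrix: changing A changes the transformation.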


Example of a matrix transformation

Let $T(\vec{x}) = A\vec{x}$ where

.

Observations

The example above shows a matrix transformation, since T is defined through multiplying the matrix A and the input vector $\vec{x}$. To better understand this transformation, we will make a few observations.

The domain of this transformation is

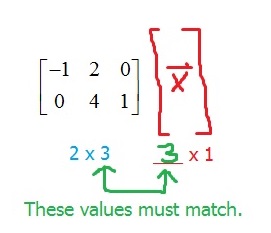

If we “plug in” a vector $\vec{x}$, we find its image by multiplying the vector by A. But this multiplication is not always defined! It is only defined if the number of entries in $\vec{x}$ matches the number of columns in A. This means that when we write out the size of the matrix and the size of the vector, the “inner numbers” must match.

The codomain of this transformation is $\mathbb{R}^2$

When multiplying a matrix and a vector, the size of the result is determined by the “outer numbers” in our diagram above.
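The “inner numbers” and “outer numbers” rule can be sketched as a small size check. The function name `product_size` and the sizes passed to it are hypothetical, picked only to show one case where the product is defined and one where it is not.

```python
def product_size(matrix_size, vector_size):
    """Return the size of A times x, or None when the product is undefined."""
    rows, cols = matrix_size        # A is rows x cols
    entries, width = vector_size    # x is entries x 1
    if width != 1:
        raise ValueError("expected a column vector of size n x 1")
    if cols != entries:             # the inner numbers disagree
        return None
    return (rows, 1)                # the outer numbers give the result size

print(product_size((2, 3), (3, 1)))  # (2, 1)
print(product_size((2, 3), (2, 1)))  # None
```

In the first call the inner numbers are both 3, so the product exists and has the size given by the outer numbers, 2 x 1. In the second call the inner numbers are 3 and 2, so the product is undefined.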

What is happening mathematically to make this true?

If we let , then:

In other words, the image of $\vec{x}$ will be a linear combination of the columns of A. Since the columns each have 2 entries, we know that the columns are vectors in $\mathbb{R}^2$, and so any linear combination will also be in $\mathbb{R}^2$. Vectors in $\mathbb{R}^2$ have dimension 2 x 1.
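The column view of the product can be written in general form. As a sketch, assume A is an m x n matrix with columns $\vec{a}_1, \dots, \vec{a}_n$ and that $\vec{x}$ has entries $x_1, \dots, x_n$; these symbols are introduced here for illustration and are not the specific values from the example.

```latex
A\vec{x}
= \begin{bmatrix} \vec{a}_1 & \vec{a}_2 & \cdots & \vec{a}_n \end{bmatrix}
  \begin{bmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{bmatrix}
= x_1\vec{a}_1 + x_2\vec{a}_2 + \cdots + x_n\vec{a}_n
```

Each $\vec{a}_i$ has m entries, so the sum on the right has m entries as well. With m = 2, every image lands in $\mathbb{R}^2$.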

From these two observations, we know that:

That is, T maps vectors in its domain to vectors in $\mathbb{R}^2$.

To find the image of any vector, we just need to multiply it by A

Suppose that we wanted to find the image of the vector under T. Then, we would simply “plug” this vector into T.

Since , the image of

under T is

.

T must be linear

Every matrix transformation is a linear transformation. You can review a proof of this idea here: Proof that every matrix transformation is a linear transformation
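A quick numerical check can make the two linearity properties tangible, although checking one example is not a proof. The matrix `A`, the vectors `u` and `v`, and the scalar `c` below are hypothetical values chosen for illustration, and the helpers `add` and `scale` are names introduced here.

```python
# Hypothetical 2 x 3 matrix, used only for illustration.
A = [[1, 0, 2],
     [0, 3, 1]]

def T(x):
    """Matrix transformation: multiply the vector x by A."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def add(u, v):
    """Entrywise sum of two vectors of the same length."""
    return [ui + vi for ui, vi in zip(u, v)]

def scale(c, u):
    """Multiply every entry of u by the scalar c."""
    return [c * ui for ui in u]

u = [1, 2, 3]
v = [4, 0, -1]
c = 5

additive = T(add(u, v)) == add(T(u), T(v))       # T(u + v) = T(u) + T(v)
homogeneous = T(scale(c, u)) == scale(c, T(u))   # T(cu) = cT(u)
print(additive, homogeneous)  # True True
```

Both checks pass for these values, and the proof shows that they hold for every choice of matrix, vectors, and scalar.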